Computer vision already runs elite sports. It's about to run the rec league too.

Twelve-camera tracking rigs and Hawk-Eye are infrastructure at the top. The same capability now fits on a $249 board and a commodity camera. What that unlocks across every sport — and why the smartest way in is the narrowest one.

There's a measurement gap in sports, and I've spent enough time building on the wrong side of it to think it's about to close fast.

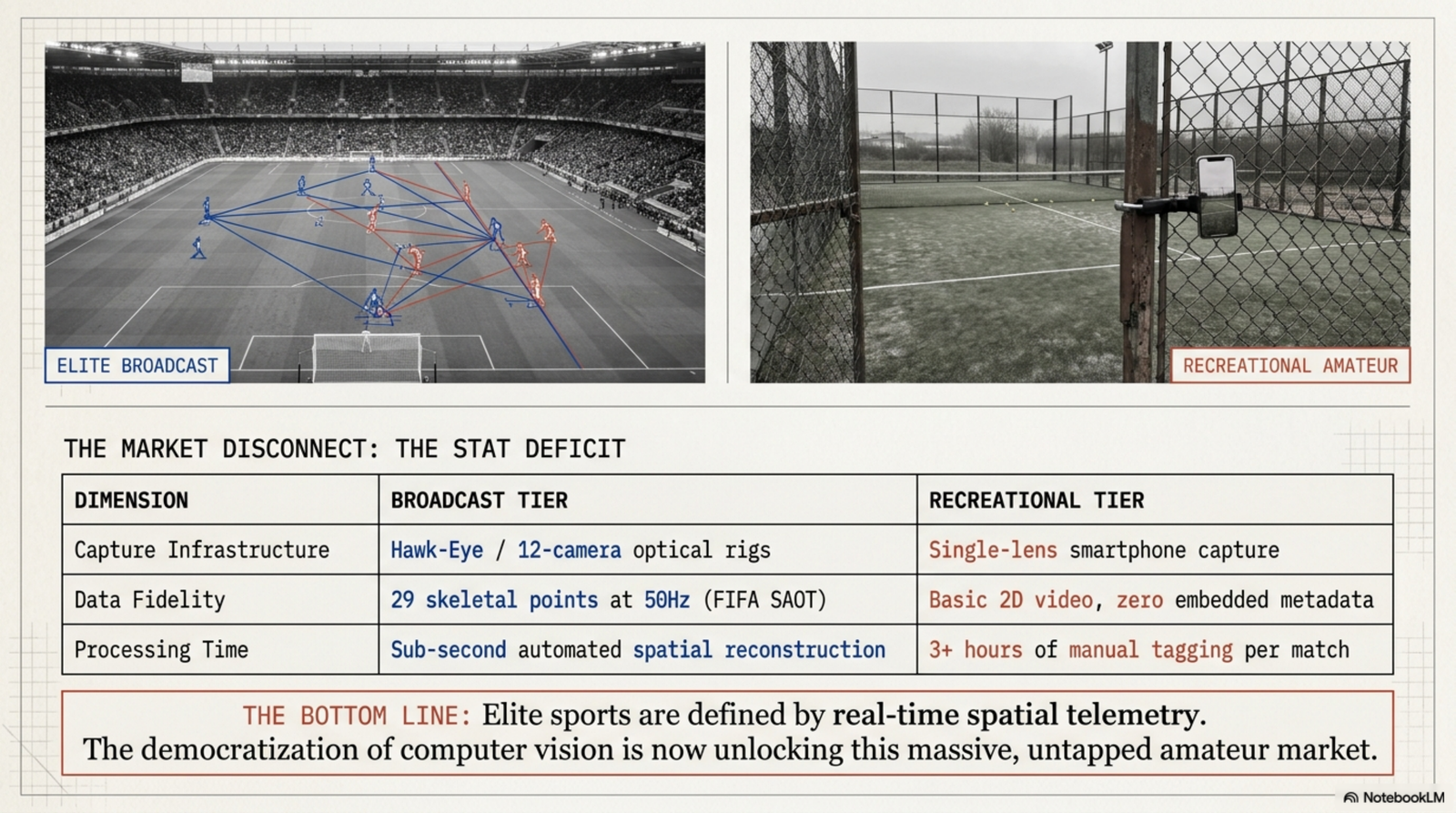

At the elite level, computer vision isn't a feature anymore — it's plumbing. FIFA's semi-automated offside system runs twelve tracking cameras and follows up to twenty-nine points on every player, fifty times a second. Major League Baseball rebuilt Statcast on Hawk-Eye optical tracking and runs it across every stadium. Nobody at that level argues about whether the technology works. It just runs.

Drop down one rung (to the academy, the league night, the weekend tournament) and measurement falls off a cliff. In most amateur programs, as one coaching write-up put it, player updates happen "in bits and pieces, some clips here, a few messages there." A computer-vision firm that spent seven months building analytics for a padel platform described the starting point bluntly: the client had cameras on every court and still couldn't automate anything. Every match report was done by hand, taking up to three hours per game.

That's the gap. Elite sport is measured continuously and automatically. Everyone else is measured by memory and a phone full of clips. And the thing about to collapse it isn't one product — it's a stack of capability that all matured at roughly the same time.

Why the gap is closing now

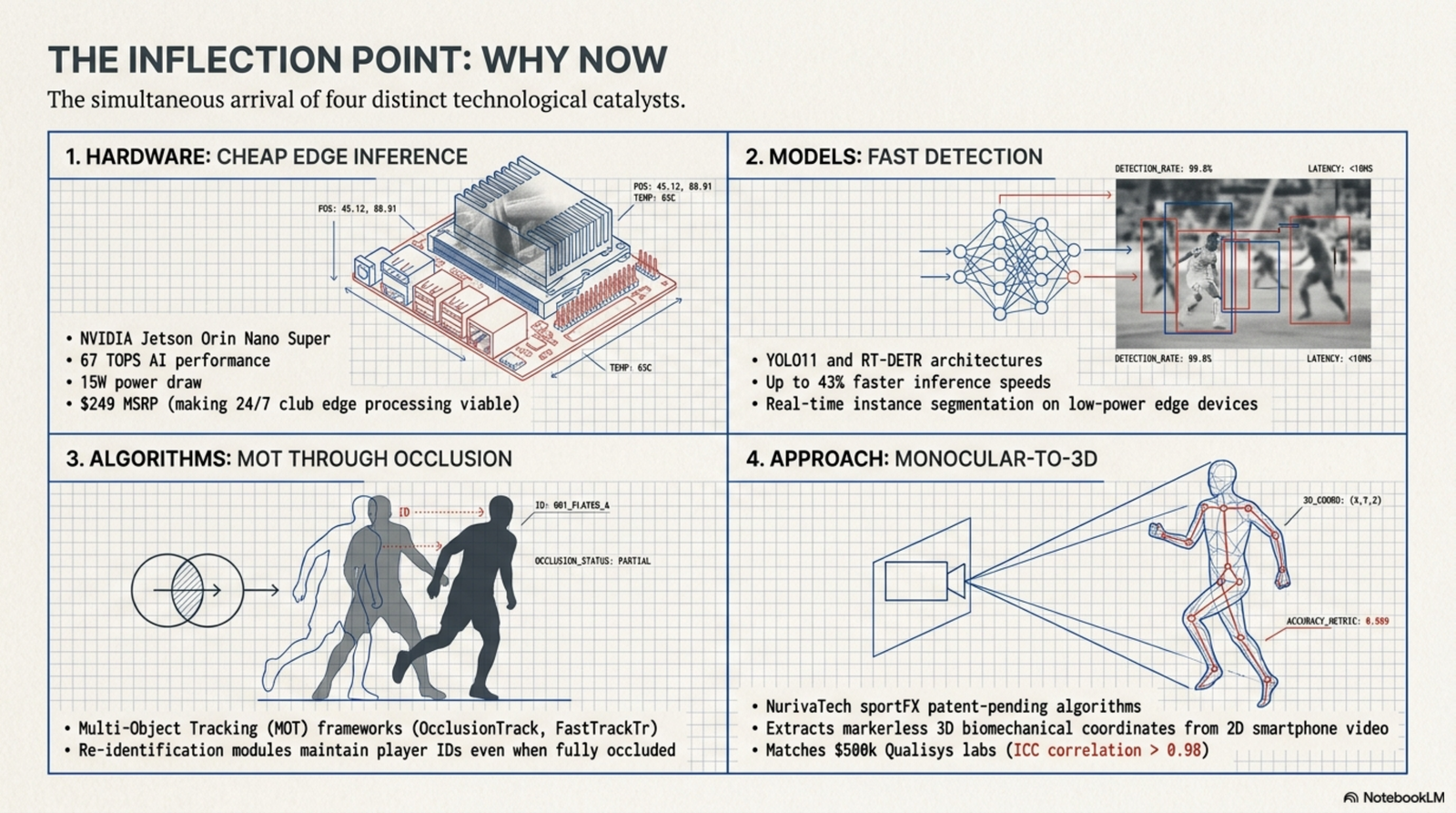

Four things changed in about three years, and you need all four for this to work.

Monocular-to-3D got real. You no longer need a multi-camera rig or wearable sensors to get spatial data. One system, NurivaTech's sportFX, claims a smartphone-only pipeline returning biomechanical measurements that correlate above 0.98 against a half-million-dollar marker-based motion-capture lab. Whether or not that exact figure holds, the direction is unmistakable: a single commodity camera is becoming a real sensor.

Tracking learned to survive occlusion. The hard problem in any multi-object scene is what happens when things overlap. Recent trackers — OcclusionTrack with its confidence-based Kalman filtering and depth-cascade matching, transformer-based trackers like FastTrackTr — are built specifically to hold identity through the mess and recover after a full occlusion. That's the difference between a demo and a system.
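
To make "hold identity through the mess" concrete, here is a minimal sketch of the oldest trick in that toolbox: a constant-velocity Kalman filter that keeps predicting while the detector sees nothing. This is a generic textbook filter in one dimension, not OcclusionTrack's or FastTrackTr's method, and the class name and noise values are my own assumptions chosen for illustration.

```python
class ConstantVelocityTrack:
    """1-D constant-velocity Kalman filter that coasts when no detection arrives."""

    def __init__(self, position, process_noise=0.01, measurement_noise=1.0):
        self.p = float(position)  # estimated position (pixels)
        self.v = 0.0              # estimated velocity (pixels per frame)
        # 2x2 covariance; velocity starts out highly uncertain.
        self.P = [[1.0, 0.0], [0.0, 10.0]]
        self.q = process_noise      # assumed value, tune per sport
        self.r = measurement_noise  # assumed value, tune per detector

    def step(self, measurement=None, dt=1.0):
        # Predict: move the state forward and inflate the covariance.
        self.p += self.v * dt
        (a, b), (c, d) = self.P
        self.P = [
            [a + dt * (b + c) + dt * dt * d + self.q, b + dt * d],
            [c + dt * d, d + self.q],
        ]
        # Update only when the detector actually saw the object.
        if measurement is not None:
            (a, b), (c, d) = self.P
            s = a + self.r
            k0, k1 = a / s, c / s
            residual = measurement - self.p
            self.p += k0 * residual
            self.v += k1 * residual
            self.P = [
                [(1 - k0) * a, (1 - k0) * b],
                [c - k1 * a, d - k1 * b],
            ]
        return self.p


# A player moving 2 px per frame is seen for ten frames, then hidden for three.
track = ConstantVelocityTrack(position=0.0)
for frame in range(1, 11):
    track.step(measurement=2.0 * frame)
variance_before = track.P[0][0]

for _ in range(3):
    coasted = track.step(measurement=None)  # occluded: predict only
variance_after = track.P[0][0]

print(f"coasted position: {coasted:.2f} (true position would be 26)")
print(f"position variance grew from {variance_before:.2f} to {variance_after:.2f}")
```

The growing variance is the useful part: it tells the matching step how far from the prediction a reappearing detection can be and still plausibly be the same player.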

Detection models got fast and cheap enough. The YOLO line and its successors will now locate small, fast objects and bodies simultaneously, in real time, on hardware you can actually afford.

And the compute moved to the edge. An NVIDIA Jetson Orin Nano Super developer kit is $249, draws between 7 and 25 watts depending on its power mode, and runs a small detection model north of 100 frames per second, all locally, with no cloud and no uplink dependency. That last point matters more than it sounds: a system that has to phone home isn't a system a venue or a consumer can rely on.

What it actually unlocks

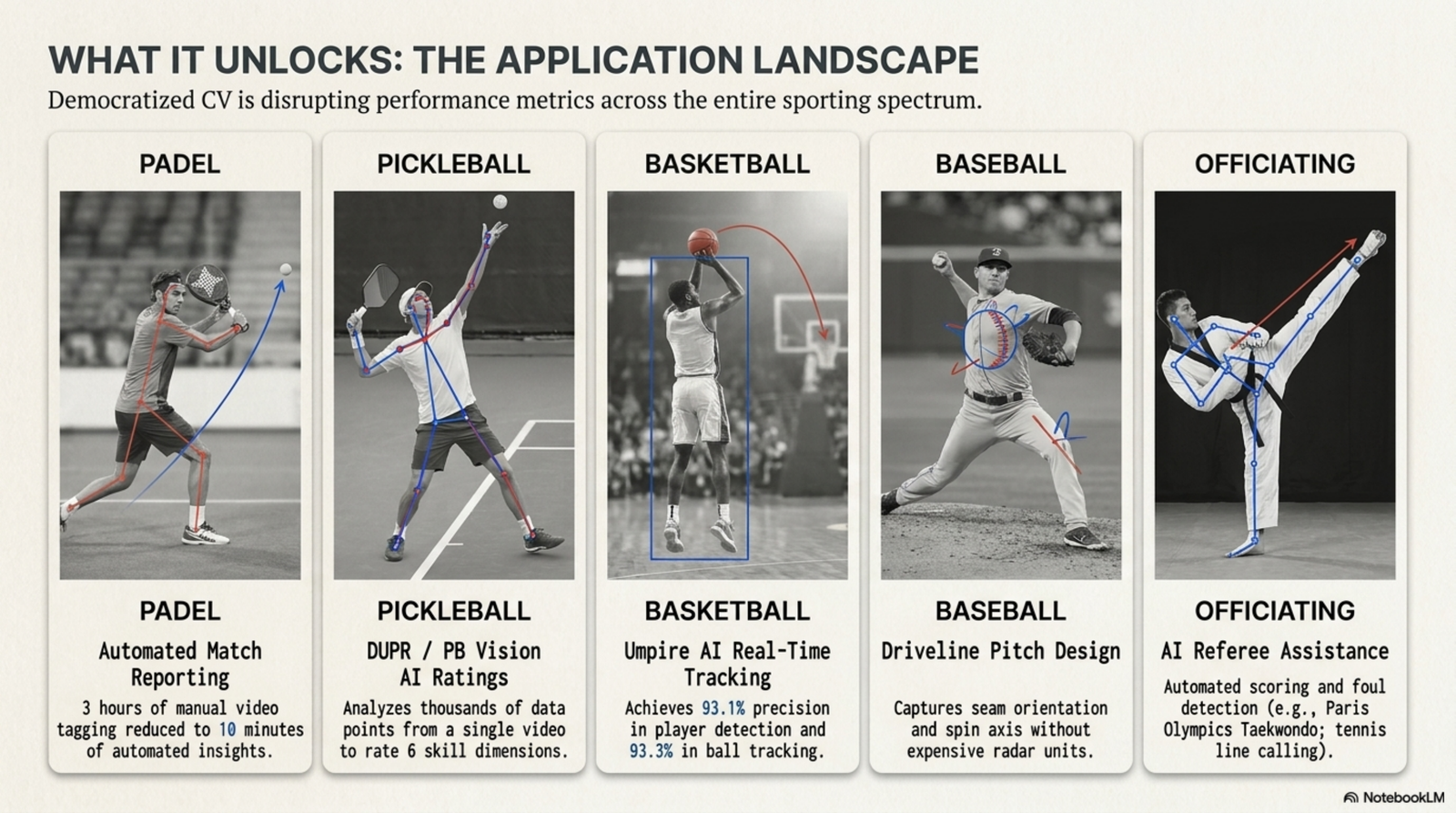

The reason this is a category and not a product: the same capability stack lands in sport after sport.

In padel, that seven-month build ended with 95% tracking accuracy and match reports in ten minutes instead of three hours. In pickleball, the DUPR rating system partnered with PB Vision to generate a player rating from a single uploaded phone video — shot quality, movement, court coverage, across six skill dimensions, no tournament required. In basketball, an "Umpire AI" research system hit 93% precision on both player and ball detection and automated possession logic on top of it. In baseball, Driveline is using vision to pull pitch spin axis and seam orientation off ordinary video — bringing Trackman-class pitch design to a high-school bullpen. The US Ski and Snowboard team is working with Google on markerless motion capture through bulky winter gear, replacing sensor suits that fail in the cold. And on the officiating side, electronic line-calling is moving from Grand Slam show courts to local clubs, while an AI taekwondo review system at the Paris Olympics processed head-strike reviews in three to nine seconds — roughly 80% faster than the human process.

None of those is the same sport. All of them are the same bet.

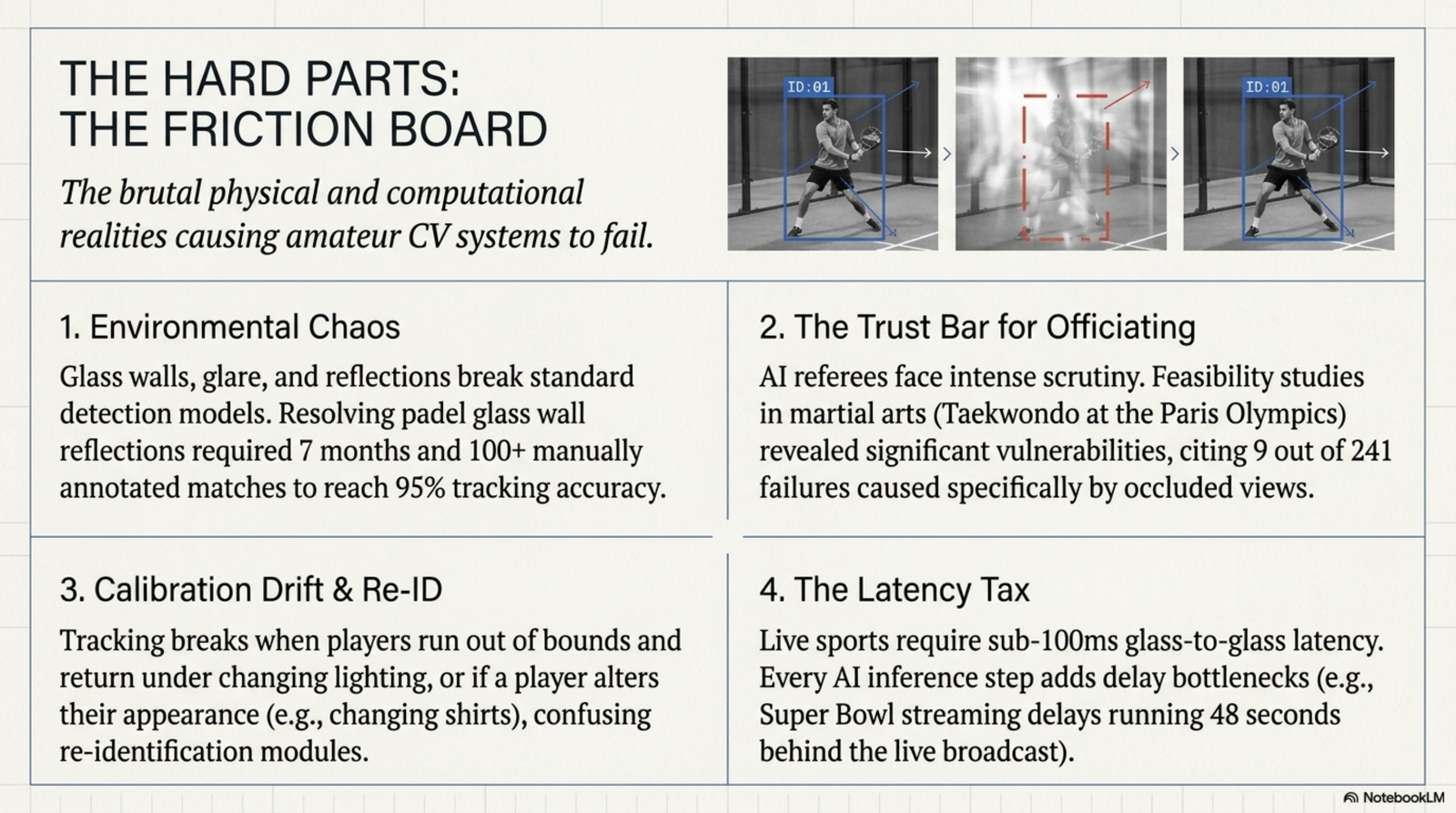

The parts that are still genuinely hard

I want to be honest about this, because the failure modes are where most of these projects actually live.

Vision breaks when the world stops being consistent. That padel team's models broke immediately on the glass walls — reflections created ghost detections, glare shifted with the time of day. When one player took his shirt off mid-match, the re-identification module failed completely. These aren't edge cases you patch later; they're the work.

Calibration drifts. Every one of these systems maps camera pixels to real-world geometry, and that map is fragile. Bump the tripod, change the height, and you re-calibrate — or every downstream number is quietly wrong.
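
One cheap guard against that, sketched below under my own assumptions, is to keep a few fixed landmarks whose real-world positions you know, push their currently detected pixel positions through the stored pixel-to-court map, and measure how far they land from where they should be. The function names, the 5 cm tolerance, and the toy overhead-camera matrix (1 pixel = 1 cm) are hypothetical.

```python
import math


def project(h, u, v):
    """Apply a 3x3 pixel-to-court matrix h to pixel (u, v)."""
    w = h[2][0] * u + h[2][1] * v + h[2][2]
    x = (h[0][0] * u + h[0][1] * v + h[0][2]) / w
    y = (h[1][0] * u + h[1][1] * v + h[1][2]) / w
    return x, y


def reprojection_error_m(h, observed_pixels, known_court_points):
    """Mean distance in metres between projected landmarks and their known positions."""
    errors = []
    for (u, v), (x, y) in zip(observed_pixels, known_court_points):
        px, py = project(h, u, v)
        errors.append(math.hypot(px - x, py - y))
    return sum(errors) / len(errors)


def needs_recalibration(h, observed_pixels, known_court_points, tolerance_m=0.05):
    """True when the landmarks have wandered further than the tolerance."""
    return reprojection_error_m(h, observed_pixels, known_court_points) > tolerance_m


# Toy calibration: an idealized overhead camera where 1 pixel = 0.01 m.
stored_map = [[0.01, 0.0, 0.0], [0.0, 0.01, 0.0], [0.0, 0.0, 1.0]]
court_corners = [(0.0, 0.0), (28.0, 0.0), (28.0, 15.0), (0.0, 15.0)]

pixels_at_setup = [(0, 0), (2800, 0), (2800, 1500), (0, 1500)]
pixels_after_bump = [(u + 12, v) for u, v in pixels_at_setup]  # tripod nudged

print(needs_recalibration(stored_map, pixels_at_setup, court_corners))
print(needs_recalibration(stored_map, pixels_after_bump, court_corners))
```

In this toy setup a twelve-pixel nudge is a twelve-centimeter error on the court, which is more than enough to flip a line call.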

The trust bar is brutal. A scoring system isn't graded on average accuracy; it's graded on disputes. That taekwondo AI matched international referees on the vast majority of calls — and still diverged on nine out of 241, nearly every one an occlusion or a blocked-view case. A system that's 96% right is, to the person on the wrong end of the other 4%, a dispute generator. And people are measurably slower to trust a machine's call than a human's, even when the machine is better.
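
The arithmetic behind that is worth writing down. The sketch below uses the nine-of-241 figure from the taekwondo review; the 500-call event is a hypothetical I made up to show how a small disagreement rate scales into a pile of disputes.

```python
total_calls = 241   # reviewed calls in the taekwondo comparison
divergent = 9       # calls where the AI and the referees disagreed

disagreement_rate = divergent / total_calls
agreement_rate = 1 - disagreement_rate


def expected_disputes(calls, rate):
    """Expected number of contested calls at a given disagreement rate."""
    return calls * rate


# Hypothetical event size, chosen only for illustration.
hypothetical_calls = 500
disputes = expected_disputes(hypothetical_calls, disagreement_rate)

print(f"agreement: {agreement_rate:.1%}")
print(f"expected disputes over {hypothetical_calls} calls: {disputes:.1f}")
```

Average accuracy stays flat as volume grows. The count of people who feel robbed does not.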

Latency is real. During Super Bowl LX, the best streaming platform still ran 48 seconds behind broadcast. Anything that wants to feel live has to win that fight locally.

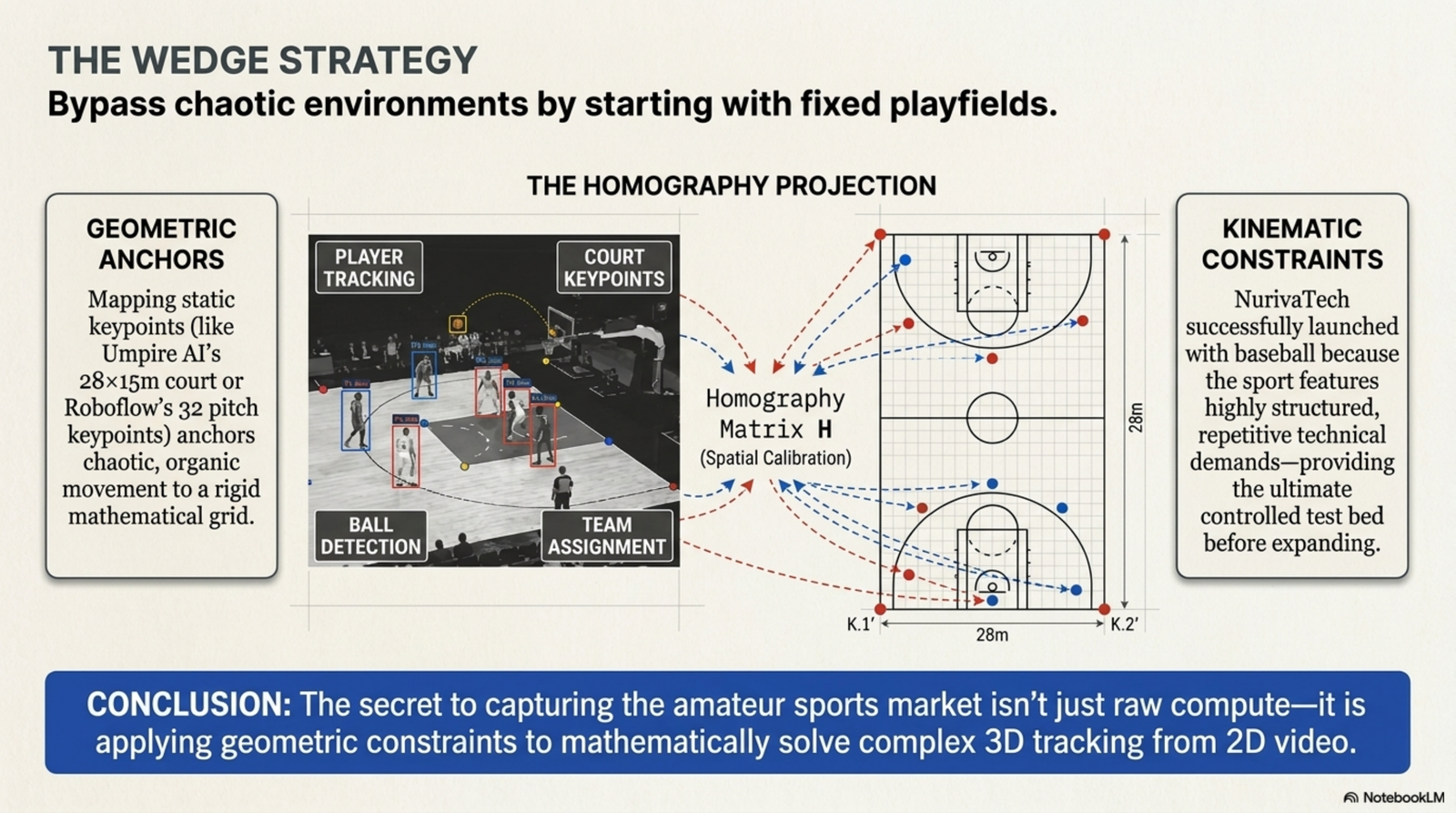

The wedge: start with a fixed playfield

Here's the part I'm most confident about, because it's where the math actually helps you.

The hardest version of this problem is an open, chaotic field. The easiest version is a fixed playfield with rigid, known geometry — because then you can lean on homography: a clean mathematical map from camera pixels to a known real-world plane. Umpire AI does it by mapping to a standard 28-by-15-meter court. A Roboflow soccer pipeline does it with 32 fixed pitch keypoints. Lock the geometry down and you've deleted an entire class of variable — which lets you spend your effort on the genuinely hard problems (small-object tracking, occlusion, the trust bar) instead of fighting the environment.
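
Here is roughly what that map looks like in code: a minimal sketch, assuming you have marked the four court corners in the image, that fits a homography to a 28-by-15-meter court and converts any pixel to court coordinates. The pixel coordinates are invented for illustration, the function names are mine, and this is the plain four-point linear solve rather than the pipeline either of those projects uses. A production system would use more keypoints and a robust estimator.

```python
def solve_linear(a, b):
    """Solve a x = b by Gauss-Jordan elimination with partial pivoting."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]
    for col in range(n):
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        if abs(m[pivot][col]) < 1e-12:
            raise ValueError("degenerate point configuration")
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                for c in range(col, n + 1):
                    m[r][c] -= factor * m[col][c]
    return [m[i][n] / m[i][i] for i in range(n)]


def fit_homography(pixels, court):
    """Fit the 3x3 pixel-to-court map (bottom-right entry fixed to 1) from four pairs."""
    a, b = [], []
    for (u, v), (x, y) in zip(pixels, court):
        # Each correspondence contributes two linear equations in the 8 unknowns.
        a.append([u, v, 1, 0, 0, 0, -u * x, -v * x])
        b.append(x)
        a.append([0, 0, 0, u, v, 1, -u * y, -v * y])
        b.append(y)
    h = solve_linear(a, b)
    return [h[0:3], h[3:6], [h[6], h[7], 1.0]]


def pixel_to_court(h, u, v):
    """Map a pixel to court metres, dividing out the projective scale."""
    w = h[2][0] * u + h[2][1] * v + h[2][2]
    x = (h[0][0] * u + h[0][1] * v + h[0][2]) / w
    y = (h[1][0] * u + h[1][1] * v + h[1][2]) / w
    return x, y


# Hypothetical corner clicks in a 1920x1080 frame, near sideline first.
corner_pixels = [(320, 900), (1600, 900), (1250, 300), (670, 300)]
court_metres = [(0.0, 0.0), (28.0, 0.0), (28.0, 15.0), (0.0, 15.0)]

H = fit_homography(corner_pixels, court_metres)
x, y = pixel_to_court(H, 960, 900)  # a player's feet on the near sideline
print(f"court position: ({x:.2f} m, {y:.2f} m)")
```

Everything downstream (speed, distance covered, in or out) becomes ordinary geometry in meters once this map exists, which is why a fixed playfield is such a gift.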

That's why the smart way into this market is the narrowest one. You don't open with "computer vision for all of sport." You pick one sport with a fixed playfield and a simple, unambiguous rule set, you get it genuinely right — referee-grade, dispute-proof — and then you generalize. NurivaTech said it cleanly about why they started with baseball: "its technical demands make it the ultimate test, and mastering it means we have the foundation for every sport."

Where this goes

The elite layer of sport has been measured, tracked, and automatically scored for over a decade. Everyone else has been waiting on four curves to cross — model quality, tracking robustness, monocular 3D, and cheap edge compute. They've crossed.

The next few years won't be about whether a camera can score a recreational game. They'll be about who picks the right wedge, clears the trust bar, and earns the right to generalize. That's the whole game now — and it's a much narrower door than "AI for sports" makes it sound.